Why are there different CME requirements in each state?

In the US, each state sets its own continuing medical education (CME) requirements. As a result, requirements range from zero CME credits in states like Indiana and South Dakota to upwards of 50 credit hours per year in Massachusetts and Illinois. The purpose of this system is to ensure that healthcare practitioners keep learning so that they can provide their patients with the best, most up-to-date care and run their practices optimally.

Traditionally, CME has been a major pain point for all healthcare practitioners (HCPs), a label that includes physicians, nurses, dentists and more, because CME takes them away from what they want to be doing, which is caring for patients. Beyond finding relevant and interesting CME courses, keeping track of completed CME is a cumbersome process. Tedious as it seems, it is incredibly important, because HCPs can be audited for compliance.

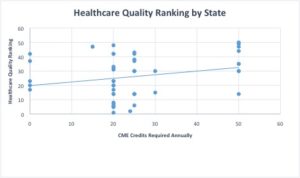

But despite these pain points for healthcare practitioners, CME works, right? Yes and no. Ideally, we would expect the states with stricter CME requirements to have better healthcare quality metrics, and that is partially true.

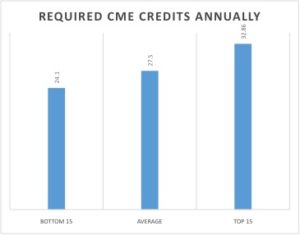

From this graph (each dot is a state), there appears to be a positive correlation between the CME required and healthcare quality ranking. The average physician in the US completes 27.50 credits/year. The fifteen lowest healthcare quality states average 24.10 credits/year, and the fifteen highest healthcare quality states average 32.86 credits/year. These data all point to the utility of CME and suggest that increasing the rigor of CME requirements could have a positive effect on healthcare quality (wait for the but).

But there is simply too much variance in this data to argue that the rigor of CME requirements is an indicator of healthcare quality! The average healthcare quality rankings for both the states that require zero credits (far below the average requirement) and the states that require 30 credits or more (above the average requirement) fall in the top half of the healthcare quality rankings. The average for zero-credit states is 24/51 (the rankings include Washington DC) and the average for the more rigorous states is 18/51, which, while better, suggests that the required CME might not actually have that much of an effect.
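For anyone who wants to repeat this kind of comparison, here is a minimal sketch of the three calculations used above: a correlation between required credits and quality rank, the average credits for the best- and worst-ranked states, and the average rank for zero-credit versus 30-plus-credit states. The rows in `SAMPLE` are made-up placeholders, not the data behind the graph, and the helper names are hypothetical; swap in the real per-state values to reproduce the figures.

```python
from math import sqrt

# Hypothetical placeholder rows: (state label, required credits/year, quality rank).
# These are NOT the article's data. Rank 1 is the best-performing state.
SAMPLE = [
    ("A", 0, 3),
    ("B", 0, 7),
    ("C", 15, 8),
    ("D", 20, 5),
    ("E", 25, 6),
    ("F", 30, 1),
    ("G", 40, 4),
    ("H", 50, 2),
]


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


def mean_credits_best_and_worst(rows, k):
    """Mean required credits for the k best-ranked and k worst-ranked states."""
    ordered = sorted(rows, key=lambda row: row[2])  # rank 1 first
    best, worst = ordered[:k], ordered[-k:]
    best_mean = sum(row[1] for row in best) / k
    worst_mean = sum(row[1] for row in worst) / k
    return best_mean, worst_mean


def mean_rank(rows, keep):
    """Mean quality rank of the states whose credit requirement passes `keep`."""
    ranks = [rank for _, credits, rank in rows if keep(credits)]
    return sum(ranks) / len(ranks) if ranks else None


credits = [row[1] for row in SAMPLE]
ranks = [row[2] for row in SAMPLE]

# Because rank 1 is best, "more credits goes with better quality"
# shows up as a NEGATIVE coefficient here.
r = pearson(credits, ranks)

best_mean, worst_mean = mean_credits_best_and_worst(SAMPLE, k=3)
zero_credit_rank = mean_rank(SAMPLE, lambda c: c == 0)
strict_rank = mean_rank(SAMPLE, lambda c: c >= 30)

print(f"correlation (credits vs. rank): {r:.2f}")
print(f"mean credits, best 3 vs. worst 3: {best_mean:.2f} vs. {worst_mean:.2f}")
print(f"mean rank, zero-credit vs. 30+ credits: {zero_credit_rank:.2f} vs. {strict_rank:.2f}")
```

With the real data, `k` would be 15 and the list would have 51 rows. Comparing the gap between the two group averages with how widely the individual ranks are scattered inside each group is one way to judge whether the difference means much.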

We want CME to be the proof that our healthcare system and its quality are reliant on the medical prowess of our healthcare practitioners. However, there are other factors at play. Medical training could be a huge factor in healthcare quality, as physicians who are trained at certain institutions are likely to be more competent and capable. Another issue that could be affecting these numbers is the US reliance on a fee-for-service method of payment, where physicians are paid for each service that they provide. In this model, physicians have an incentive to provide as much care as possible, but since medical outcomes aren’t a factor in what they are paid, they have no matching incentive to provide quality care. This is not to say that all physicians who operate under fee-for-service are avaricious con-men that we should hide our children from, but that perhaps a move to a bundled payment system would be wise if we want to see improvements in healthcare quality.

But what if the correlation between healthcare quality and CME rigor is real? It would be interesting to see how changing only the CME requirements of a state that consistently gets the same healthcare quality ranking would affect that ranking. This would be a tough experiment to run and control for, but it could have vast implications for the future of CME and how it is regulated.

Sources:

Commonwealth Fund

State population info census data

CME requirement data from https://www.acep.org/Continuing-Education-top-banner/CME-Requirements-by-State/